Automatic Quality Assessment of Source Code Comments: The JavadocMiner

Abstract

An important software engineering artefact used by developers and maintainers to assist in software comprehension and maintenance is source code documentation. It provides insights that help software engineers to effectively perform their tasks, and therefore ensuring the quality of the documentation is extremely important. Inline documentation is at the forefront of explaining a programmer's original intentions for a given implementation. Since this documentation is written in natural language, ensuring its quality needs to be performed manually. In this paper, we present an effective and automated approach for assessing the quality of inline documentation using a set of heuristics, targeting both quality of language and consistency between source code and its comments. We apply our tool to the different modules of two open source applications (ArgoUML and Eclipse), and correlate the results returned by the analysis with bug defects reported for the individual modules in order to determine connections between documentation and code quality.
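To make the idea of checking consistency between source code and its comments more concrete, here is a minimal sketch of one such heuristic: flagging method parameters that have no matching `@param` tag. It is an illustration only and not necessarily a heuristic the JavadocMiner implements; the class name `ParamTagCheck`, the method `undocumentedParams`, and the regular expression are all assumptions made for this example.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper, not part of the JavadocMiner.
public class ParamTagCheck {
    // Matches "@param name" in a Javadoc comment and captures the name.
    private static final Pattern PARAM_TAG = Pattern.compile("@param\\s+(\\w+)");

    /** Returns the declared parameter names that have no @param tag in the comment. */
    public static List<String> undocumentedParams(String javadoc, List<String> paramNames) {
        // Collect every parameter name mentioned in an @param tag.
        Set<String> documented = new LinkedHashSet<>();
        Matcher m = PARAM_TAG.matcher(javadoc);
        while (m.find()) {
            documented.add(m.group(1));
        }
        // Keep the declared parameters that were never documented.
        List<String> missing = new ArrayList<>();
        for (String name : paramNames) {
            if (!documented.contains(name)) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        String comment = "/**\n * Adds two numbers.\n * @param a the first addend\n * @return the sum\n */";
        // Parameter "b" is declared but has no @param tag.
        System.out.println(undocumentedParams(comment, List.of("a", "b"))); // prints [b]
    }
}
```

A real tool would take the parameter names from a parsed syntax tree instead of a hand-written list, and would combine many such checks with measures of language quality.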

Welcome to Dr. René Witte's Homepage

Welcome to my personal homepage. Here you can find information on my current research as well as my publications and other activities. More research-related information is published on semanticsoftware.info, where you can also contact me. For the socially networked, I'm also on Google+, LinkedIn, Xing and Twitter.

Connecting Wikis and Natural Language Processing Systems

Abstract

We investigate the integration of Wiki systems with automated natural language processing (NLP) techniques. The vision is that of a "self-aware" Wiki system reading, understanding, transforming, and writing its own content, as well as supporting its users in information analysis and content development. We provide a number of practical application examples, including index generation, question answering, and automatic summarization, which demonstrate the practicability and usefulness of this idea. A system architecture providing the integration is presented, as well as first results from an initial implementation based on the GATE framework for NLP and the MediaWiki system.

General Terms: Design, Human Factors, Languages

Keywords: Self-aware Wiki System, Wiki/NLP Integration

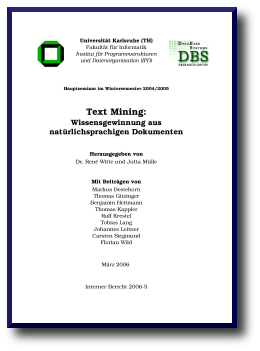

Technical Report on Text Mining (in German)

Submitted by rene on Wed, 2007-01-31 09:04.

A new technical report on Text Mining (in German) is now available. This is a collection of reports written by students within a Hauptseminar, which was given by yours truly and Jutta Mülle at Universität Karlsruhe, Germany.

Enhancing the OpenOffice.org Word Processor with Natural Language Processing Capabilities

Abstract

Today's knowledge workers are often overwhelmed by the vast amount of readily available natural language documents that are potentially relevant for a given task. Natural language processing (NLP) and text mining techniques can deliver automated analysis support, but they are often not integrated into commonly used desktop clients, such as word processors. We present a plug-in for the OpenOffice.org word processor Writer that allows users to access any kind of NLP analysis service mediated through a service-oriented architecture. Semantic Assistants can now provide services such as information extraction, question-answering, index generation, or automatic summarization directly within an end user's application.

A Semantic Wiki Approach to Cultural Heritage Data Management

Abstract

Providing access to cultural heritage data beyond book digitization and information retrieval projects is important for delivering advanced semantic support to end users, in order to address their specific needs. We introduce a separation of concerns for heritage data management by explicitly defining different user groups and analyzing their particular requirements. Based on this analysis, we developed a comprehensive system architecture for accessing, annotating, and querying textual historic data. Novel features are the deployment of a Wiki user interface, natural language processing services for end users, metadata generation in OWL ontology format, SPARQL queries on textual data, and the integration of external clients through Web Services. We illustrate these ideas with the management of a historic encyclopedia of architecture.

Empowering the Enzyme Biotechnologist with Ontologies

Introduction

The FungalWeb Ontology is a knowledge representation vehicle designed to integrate information relevant to industrial applications of enzymes. The ontology integrates information from established sources and supports complex queries to the instantiated FungalWeb knowledge base. The ontology represents prototype Semantic Web technology customized to the domain of industrial enzymes with a focus on enzyme discovery, commercial enzyme products and vendors, and the industrial applications and benefits of industrial enzymes. Using a series of application scenarios we demonstrate the utility of this 'Semantic Web' infrastructure to the enzyme biotechnologist.

Fuzzy Set Theory-Based Belief Processing for Natural Language Texts

Introduction

The growing number of publicly available information sources makes it impossible for individuals to keep track of all the various opinions on one topic. The goal of the artificial believer system we present in this paper is to extract and analyze opinionated statements from newspaper articles.

Beliefs are modeled with a fuzzy-theoretic approach applied after NLP-based information extraction. A fuzzy believer models a human agent, deciding what statements to believe or reject based on different, configurable strategies.

Text Mining: Wissensgewinnung aus natürlichsprachigen Dokumenten

(This webpage is about a technical report on Text Mining, written in German. Try Google Translate for an English version.)

Internal Report 2006-5, Faculty of Informatics, Universität Karlsruhe (TH), Germany

Edited by René Witte and Jutta Mülle

ISSN 1432-7864

200 pages, 75 figures

Algorithms and semantic infrastructure for mutation impact extraction and grounding

Abstract

Background

Mutation impact extraction is a hitherto unaccomplished task in state-of-the-art mutation extraction systems. Protein mutations and their impacts on protein properties are hidden in scientific literature, making them poorly accessible for protein engineers and inaccessible for phenotype-prediction systems that currently depend on manually curated genomic variation databases.

Results

We present the first rule-based approach for the extraction of mutation impacts on protein properties, categorizing their directionality as positive, negative or neutral. Furthermore, protein and mutation mentions are grounded to their respective UniProtKB IDs, and selected protein properties, namely protein functions, are grounded to concepts found in the Gene Ontology. The extracted entities are used to populate an OWL-DL Mutation Impact ontology, facilitating complex querying for mutation impacts using SPARQL. We illustrate retrieval of proteins and mutant sequences for a given direction of impact on specific protein properties. Moreover, we provide programmatic access to the data through semantic web services using the SADI (Semantic Automated Discovery and Integration) framework.

Conclusion

We address the problem of access to legacy mutation data in unstructured form through the creation of novel mutation impact extraction methods, which are evaluated on a corpus of full-text articles on haloalkane dehalogenases, tagged by domain experts. Our approaches show state-of-the-art levels of precision and recall for Mutation Grounding, and a respectable level of precision but lower recall for the task of Mutant-Impact relation extraction. The system is deployed using text mining and semantic web technologies with the goal of publishing to a broad spectrum of consumers.