Tutorial: Applications for the Semantic Web

Description

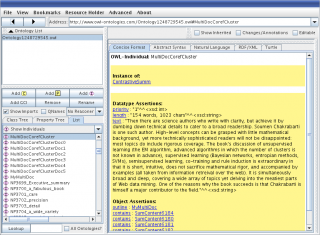

The Semantic Web vision is considered the next generation of the Web, one that enables the sharing of data, resources, and knowledge between parties that belong to different organizations, cultures, and/or communities. Ontologies and rules play the main role in the Semantic Web for publishing community vocabularies and policies, for annotating resources, and for turning Web applications into inference-enabled collaboration platforms. After a short introduction to the basic concepts, standards, and tools of the Semantic Web, we present how today's Semantic Web tools, languages, and techniques can be used in various applications. We start with the use of Semantic Web technologies for providing online educators with feedback about how their students use online courses in learning management systems. Next, we demonstrate the use of Semantic Web technologies and text mining techniques to improve the software development process and software maintenance. Finally, we explain the use of Semantic Web technologies in multimedia-enhanced applications.

A Quality Perspective of Evolvability Using Semantic Analysis

Abstract

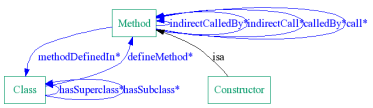

Software development and maintenance are highly distributed processes that involve a multitude of supporting tools and resources. Knowledge relevant to these resources is typically dispersed over a wide range of artifacts, representation formats, and abstraction levels. In order to stay competitive, organizations are often required to assess and provide evidence that their software meets the expected requirements. In our research, we focus on assessing non-functional quality requirements, specifically evolvability, through semantic modeling of relevant software artifacts. We introduce our SE-Advisor that supports the integration of knowledge resources typically found in software ecosystems by providing a unified ontological representation. We further illustrate how our SE-Advisor takes advantage of this unified representation to support the analysis and assessment of different types of quality attributes related to the evolvability of software ecosystems.

ERSS at TAC 2008

Abstract

An Automatically Generated Summary

ERSS 2008 attempted to rectify certain issues of ERSS 2007. The improvements to readability, however, do not reflect in significant score increases, and in fact the system fell in overall ranking. While we have not concluded our analysis, we present some preliminary observations here.

Creating a Fuzzy Believer to Model Human Newspaper Readers

Abstract

We present a system capable of modeling human newspaper readers. It is based on the extraction of reported speech, which is subsequently converted into a fuzzy theory-based representation of single statements. A domain analysis then assigns statements to topics. A number of fuzzy set operators, including fuzzy belief revision, are applied to model different belief strategies. At the end, our system holds certain beliefs while rejecting others.
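The abstract does not spell out how statements or operators are represented, so the following is only a minimal sketch under stated assumptions. It treats each source's statements as a fuzzy set (statement id mapped to a degree in [0, 1]) and uses the standard Zadeh min/max operators, not the paper's fuzzy belief revision operator. All names, ids, degrees, and the 0.5 threshold are hypothetical.

```python
from typing import Dict, Set

# Assumption: a fuzzy set maps a statement id to a membership degree in [0, 1].
FuzzySet = Dict[str, float]


def fuzzy_union(a: FuzzySet, b: FuzzySet) -> FuzzySet:
    """Zadeh union: pointwise maximum of the membership degrees."""
    return {k: max(a.get(k, 0.0), b.get(k, 0.0)) for k in a.keys() | b.keys()}


def fuzzy_intersection(a: FuzzySet, b: FuzzySet) -> FuzzySet:
    """Zadeh intersection: pointwise minimum of the membership degrees."""
    return {k: min(a.get(k, 0.0), b.get(k, 0.0)) for k in a.keys() | b.keys()}


def fuzzy_complement(a: FuzzySet) -> FuzzySet:
    """Standard complement: one minus the membership degree."""
    return {k: 1.0 - v for k, v in a.items()}


def held_beliefs(beliefs: FuzzySet, threshold: float = 0.5) -> Set[str]:
    """Statements whose degree reaches the (hypothetical) acceptance threshold."""
    return {k for k, v in beliefs.items() if v >= threshold}


# Hypothetical statements reported by two newspaper sources.
source_a: FuzzySet = {"s1": 0.9, "s2": 0.2}
source_b: FuzzySet = {"s1": 0.6, "s3": 0.7}

# Two illustrative strategies: accept anything some source supports,
# or only what every source supports.
credulous = fuzzy_union(source_a, source_b)
skeptical = fuzzy_intersection(source_a, source_b)

print(sorted(held_beliefs(credulous)))
print(sorted(held_beliefs(skeptical)))
```

Swapping the operator changes which statements end up held or rejected, which is the sense in which a belief strategy is configurable in this sketch.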

Processing of Beliefs extracted from Reported Speech in Newspaper Articles

Abstract

The growing number of publicly available information sources makes it impossible for individuals to keep track of all the various opinions on one topic. The goal of our artificial believer system presented in this paper is to extract and analyze statements of opinion from newspaper articles.

Beliefs are modeled using a fuzzy-theoretic approach applied after NLP-based information extraction. A fuzzy believer models a human agent, deciding what statements to believe or reject based on different, configurable strategies.

LockMe! for PalmOS

Current Version is 1.1.

Works on PalmOS 2.x and higher

Developed under Linux with gcc, pilrc and CoPilot.

(This web page is about an old PalmOS security utility of mine, LockMe! Although no longer being maintained, the tool and its source code are still available.)

Description

LockMe! periodically locks your Palm, starting at a specified time.

Tutorial: Introduction to Text Mining

Tutorial Description

Do you have a lack of information? Or do you rather feel overwhelmed by the sheer amount of (online) available content, like emails, news, web pages, and electronic documents? The rather young field of Text Mining developed from the observation that most knowledge today - more than 80% of the data stored in databases - is hidden within documents written in natural languages and thus cannot be automatically processed by traditional information systems.

Text Mining, "also known as intelligent text analysis, text data mining or knowledge-discovery in text (KDT), refers generally to the process of extracting interesting and non-trivial information and knowledge from unstructured text." Text Mining is a highly interdisciplinary field, drawing on foundations and technologies from fields like computational linguistics, database systems, and artificial intelligence, but applying these in new and often unconventional ways.

Architektur von Fuzzy-Informationssystemen

(This web page is about my book, "Architecture of Fuzzy Information Systems", which is written in German. You can try a Google translation.)

Architektur von Fuzzy-Informationssystemen

by René Witte

ISBN 3-8311-4149-5

330 pages, 82 figures

Copyright © 2002 René Witte

All rights reserved by the author.

Where to buy

Description of contents

Because of the models and technologies they employ, today's information systems assume that the data they manage is always precise, certain, and consistent. Reality looks different, however: information is in fact often imprecise, vague, uncertain, or inconsistent.

Particularly in complex information systems, which aim to represent reality as faithfully as possible, one does not want to lose these so-called imperfections but rather to represent them explicitly, so that both development and end users can benefit from them: a bank, for instance, has a strong interest in a correct description of a customer's creditworthiness; an environmental information system must convey credible data about the environmental pollution of a region, as must a traffic guidance system about possible congestion risk. Business-to-business marketplaces need information about the reliability of business partners, and digital libraries about the relevance of retrieved text passages.

Fuzzy theory, which is already used successfully in industry in many other areas such as control engineering, can be used to model such imprecise and uncertain data. For information systems, however, there has so far been no systematic approach to extending existing models, technologies, and architectures that remains compatible with established standards and embeds the new capabilities in an orthogonal way. This book, which is based on the author's dissertation, presents for the first time a complete architecture model for the development of fuzzy information systems. After an introduction to the necessary foundations of fuzzy theory, a model suitable for information systems is built up formally, and it is shown how this model can be realized with common object-oriented languages. Finally, for system development, a suitable reference architecture is presented that follows current multi-tier client/server architectures.

In addition, the book offers practitioners two concrete application examples, a fuzzy decision support system and a fuzzy text analysis system, which are used to describe the development of fuzzy applications in detail.

Semantic Assistants – User-Centric Natural Language Processing Services for Desktop Clients

Abstract

Today's knowledge workers have to spend a large amount of time and manual effort on creating, analyzing, and modifying textual content. While more advanced semantically-oriented analysis techniques have been developed in recent years, they have not yet found their way into commonly used desktop clients, be they generic (e.g., word processors, email clients) or domain-specific (e.g., software IDEs, biological tools). Instead of forcing users to leave their current context and use an external application, we propose a "Semantic Assistants" approach, where semantic analysis services relevant for the user's current task are offered directly within a desktop application. Our approach relies on an OWL ontology model for context and service information and integrates external natural language processing (NLP) pipelines through W3C Web services.

Attributions

Abstract

We present here the outline of an ongoing research effort to recognize, represent, and interpret attributive constructions such as reported speech in newspaper articles. The role of reported speech is attribution: the statement does not assert some information as 'true' but attributes it to some source. The description of the source and the choice of the reporting verb can express the reporter's level of confidence in the attributed material.
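To make the three parts of an attribution concrete (source, reporting verb, attributed content), here is a toy pattern-based sketch. It is not the approach described in the abstract: a real system would rely on full NLP analysis rather than one regular expression. The verb list and the numeric confidence values attached to each verb are purely hypothetical.

```python
import re
from typing import NamedTuple, Optional

# Hypothetical reporting verbs with made-up confidence values in [0, 1].
REPORTING_VERBS = {"said": 0.5, "claimed": 0.3, "confirmed": 0.9, "alleged": 0.2}


class Attribution(NamedTuple):
    source: str        # who the statement is attributed to
    verb: str          # reporting verb chosen by the reporter
    content: str       # the attributed material itself
    confidence: float  # illustrative value looked up from the verb


# Matches "<source> <verb> [that] <content>[.]" for the verbs listed above.
_PATTERN = re.compile(
    r"^(?P<source>.+?)\s+(?P<verb>" + "|".join(REPORTING_VERBS) + r")"
    r"\s+(?:that\s+)?(?P<content>.+?)\.?$"
)


def extract_attribution(sentence: str) -> Optional[Attribution]:
    """Return the attribution found in a sentence, or None if nothing matches."""
    match = _PATTERN.match(sentence.strip())
    if match is None:
        return None
    verb = match.group("verb")
    return Attribution(
        match.group("source"), verb, match.group("content"), REPORTING_VERBS[verb]
    )


result = extract_attribution("The minister claimed that the budget is balanced.")
print(result)
```

A sentence without one of the listed verbs, such as "The road is closed.", yields `None`: it is asserted directly rather than attributed to a source.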